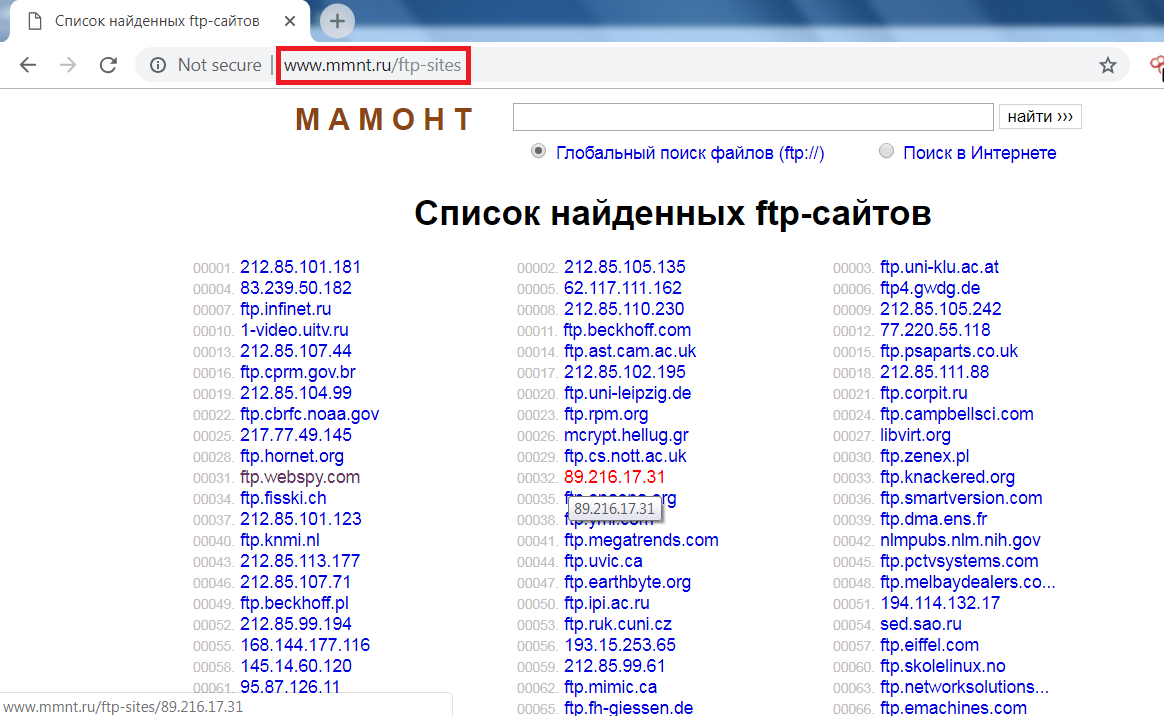

A search engine saves the text (HTML, PDF, TXT) and content (images, videos) of a website and ranks the content for suitable search terms.

What is a crawler?

A crawler is a computer program that automatically visits websites on the Internet, saves them and extracts the links from them. The links lead to new websites with new content. The crawler builds a large database from the websites it finds and avoids indexing the same pages again.

Ranking according to Larry Page

"PageRank" is an algorithm that determines the order of the web pages for a search term. Websites with "good" content have many links from other domains that point to them. The more backlinks a website has, the higher it appears in the ranking.

Tutorial Vulnerability Search / Google Hacking

How can hackers use search engines for their purposes?

Some website operators are careless and leave websites on their server without effective indexing protection. Rule-compliant crawlers only visit websites that website operators have not "excluded". The big downside is that Google assumes "by default" that every web page should be indexed. The crawler only stays away if indexing is explicitly forbidden.

- site: lists all web pages that Google has indexed for a domain.
- filetype:pdf only searches for PDF documents.
- intitle: searches for a term that must appear in the title of the web page.

Google also stores a copy of a page for a while, even after the administrator has deleted it.

Politicians quickly come up with the idea of banning these tools, but then the government would have to ban kitchen knives as well. Anyone can buy a sharp knife with a 26 cm blade in the supermarket, to cook with or to stab people with. On the one hand, you can use a search engine to research your thesis. On the other hand, a hacker can misuse the same tool to gather information about a victim's website and then use it to break into the systems. Ethical hackers use the same tool to help companies and inform them about bugs, data leaks or privacy violations.

Google indexes all websites that are not excluded because the search engine wants to deliver the best possible results for a search term. The software assumes that website operators always want their websites to appear in the Google rankings as quickly as possible.

Protection against indexing / Google hacking

The best protection is not to get indexed.

The robots.txt is located in the root directory of the website (next to the index.html / index.php). It is intended to help the "benign" bots, which read it to know which web pages to index and which ones to skip. On its own, the robots.txt is no longer state of the art: it only asks crawlers to skip pages, and a skipped page can still be listed if other websites link to it. The noindex meta tag tells a crawler not to include a page in its collection, and many bots honor it.

Any form of authentication keeps crawlers away from the protected pages. Servers behind a firewall, which can only be reached via VPN, prevent a bot from accidentally becoming aware of the website. Strictly separate public content and internal content through different (sub)domains and content management systems.

Crawlers can only visit websites they know. They find them by collecting the links available on a set of starting websites. Keep an internal website or subdomain from becoming publicly known.

Besides the good bots, there are also the bad bots. They snoop around on every website they can somehow find (link, manual entry). Website operators can catch these malicious bots with spider traps: a pre-processor can create an infinite number of Lorem Ipsum web pages, and the bots get into the spider trap via a hidden link and index an infinite amount of nonsense content.

Google hacking, also known as Google Dorking, is a computer hacking technique. It uses advanced Google search operators to find security holes in the configuration and code that websites use, and it is also useful for retrieving hidden information that is not easily accessible to the public. Google Dorking involves using advanced operators in the Google search engine to locate specific text strings within search results. One of the more popular uses is finding specific versions of vulnerable web applications. Advanced Google Dorks can help Open Source Intelligence (OSINT) gatherers and penetration testers locate exposed files containing sensitive information. Writing Google Dorks is not as straightforward as entering a simple search query on Google's main page. The process takes some time to get used to. However, the returned results can be worth the effort.
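The operators mentioned in this article (site:, filetype:, intitle:) can be combined into a single query. The following is a minimal sketch of that idea, not an official tool: the helper names `build_dork` and `search_url` are made up for illustration, and `example.com` stands in for a domain you are authorized to test.

```python
from urllib.parse import urlencode


def build_dork(terms="", site=None, filetype=None, intitle=None):
    """Combine search operators into one query string (hypothetical helper)."""
    parts = []
    if site:
        # Restrict results to pages indexed for one domain.
        parts.append(f"site:{site}")
    if filetype:
        # Restrict results to one document type, for example pdf.
        parts.append(f"filetype:{filetype}")
    if intitle:
        # Quote multi-word titles so the operator covers the whole phrase.
        value = f'"{intitle}"' if " " in intitle else intitle
        parts.append(f"intitle:{value}")
    if terms:
        parts.append(terms)
    return " ".join(parts)


def search_url(query):
    """Turn a query string into a Google search URL (hypothetical helper)."""
    return "https://www.google.com/search?" + urlencode({"q": query})


query = build_dork(site="example.com", filetype="pdf")
print(query)              # site:example.com filetype:pdf
print(search_url(query))  # https://www.google.com/search?q=site%3Aexample.com+filetype%3Apdf
```

Running such a query against your own domain is a quick way to see which documents Google has already indexed there.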

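The robots.txt rules discussed under protection against indexing can be checked before a site goes live. The sketch below uses Python's standard `urllib.robotparser` module to test what a rule-compliant crawler would skip. The rules, the bot name `ExampleBot` and the URLs are assumptions made up for this example, and `is_allowed` is a hypothetical helper.

```python
from urllib.robotparser import RobotFileParser

# Example rules: every bot is asked to stay out of /internal/.
ROBOTS_TXT = """\
User-agent: *
Disallow: /internal/
"""


def is_allowed(robots_txt, user_agent, url):
    """Return True if a rule-compliant bot may fetch the URL (hypothetical helper)."""
    parser = RobotFileParser()
    # parse() takes the file content as a list of lines, so no network access is needed.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


print(is_allowed(ROBOTS_TXT, "ExampleBot", "https://example.com/index.html"))           # True
print(is_allowed(ROBOTS_TXT, "ExampleBot", "https://example.com/internal/report.pdf"))  # False
```

This only shows what well-behaved bots will do. Bad bots ignore the file, which is why authentication and strict separation of internal content remain necessary.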